Slides from my talk at the Software Cornwall Business Connect 2017 Event on ‘TDD Driving Quality’.


Agile on the Beach 2016

Slides from my talk at Agile on the Beach 2016 on “How we implemented TDD in Embedded C & C++”

Embedded TDD

This post is still a work in progress. It is the basis of a conference presentation I am planning for NDC2015.

Abstract

With the ever-increasing complexity of software, development practices like TDD are becoming more and more important. Embedded software development presents an extra set of challenges when practicing TDD: hardware is often still in development, expensive or in limited supply; deploying to the target device takes a long time; and the target device has limited program space and RAM. For these reasons, testing of embedded software is often performed manually (if at all).

There is a large number of papers and articles on TDD for systems without the added complexities of an embedded environment. We’ve been developing embedded software using TDD (and a variety of other Agile techniques) for the past 6 years. This paper presents some of the patterns and practices that help us deal with the extra complications incurred when practicing TDD in an embedded environment. It covers how we keep the feedback from our tests fast while still running tests on the target hardware and how we mock out dependencies, and it discusses the advantages and disadvantages of the techniques shown.

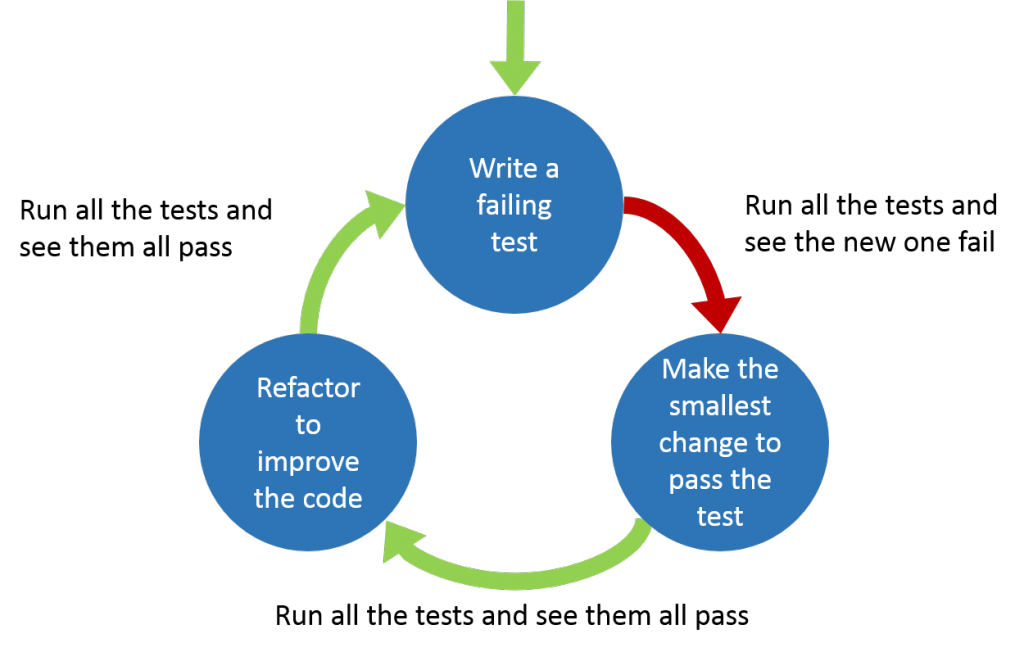

The TDD Cycle

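The standard TDD cycle is Red, Green, Refactor: write a failing test, write just enough code to make it pass, then refactor while keeping all the tests passing.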

Tests written with TDD should be FIRST:

- Fast – The tests should be fast to execute, both individually and as a complete test run.

- Independent/Isolated – The tests should not fail because of external factors. No test depends on another, so they can run in any order.

- Repeatable – Tests must return the same result every time they run; they should never give intermittent results.

- Self-validating – There should be no need for human intervention to determine if a test passes or fails.

- Timely – The tests should be written before the code.
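The FIRST properties can be made concrete with a small example. This is a minimal sketch in plain C using only the standard `assert`; the debouncer module (`debounce_t`, `debounce_init`, `debounce_update`) and the threshold of three samples are hypothetical. Each test creates its own state, so the tests are independent; nothing touches hardware or timers, so they are fast and repeatable; and `assert` makes them self-validating.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical module: a button debouncer that only reports a new state
 * after DEBOUNCE_SAMPLES consecutive identical raw samples. */
#define DEBOUNCE_SAMPLES 3u

typedef struct {
    bool stable;      /* last debounced state */
    bool candidate;   /* raw state currently being counted */
    uint8_t count;    /* consecutive samples of the candidate */
} debounce_t;

void debounce_init(debounce_t *d, bool initial)
{
    d->stable = initial;
    d->candidate = initial;
    d->count = 0;
}

/* Feed one raw sample; returns the debounced state. */
bool debounce_update(debounce_t *d, bool raw)
{
    if (raw == d->stable) {
        d->candidate = raw;        /* no change pending: reset the count */
        d->count = 0;
    } else if (raw != d->candidate) {
        d->candidate = raw;        /* new candidate: start counting */
        d->count = 1;
    } else if (++d->count >= DEBOUNCE_SAMPLES) {
        d->stable = raw;           /* held long enough: accept it */
        d->count = 0;
    }
    return d->stable;
}

/* Each test builds its own debouncer, so tests can run in any order. */
void test_single_glitch_is_ignored(void)
{
    debounce_t d;
    debounce_init(&d, false);
    assert(debounce_update(&d, true) == false);
    assert(debounce_update(&d, false) == false);
}

void test_press_accepted_after_three_samples(void)
{
    debounce_t d;
    debounce_init(&d, false);
    assert(debounce_update(&d, true) == false);
    assert(debounce_update(&d, true) == false);
    assert(debounce_update(&d, true) == true);
}
```

In TDD these tests would come first (Timely), one failing test at a time, with `debounce_update` grown just enough to make each pass.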

Testing Strategy

Testing on the Target Device

Running tests on the target device is the obvious choice, but it’s not without its problems.

Advantages

- Accurate test results: the tests run on the real hardware, built with the real compiler, so they avoid the host/target differences that can affect tests run on the host.

Disadvantages

- Slower feedback

  - Programming the target device can be slow

  - The target device is often slow compared to modern PCs, so the tests will run more slowly

  - Transferring the test results back to the development platform can be slow, depending on the method used

  - This slows down your development process

  - It makes you run the tests less often, leading to bigger changes, more mistakes and missed execution paths

- Limited code space and RAM

  - The tests and the test framework are likely to be at least as large as the code under test

  - More on ways to overcome this later

- You need target hardware to run the tests

  - Limited hardware – not enough for every development pair

  - Often expensive

  - Sometimes broken

Testing on the Development Platform

Running tests on the development platform solves most (if not all) of the problems encountered with testing on the target platform but introduces some new problems.

Advantages

- Fast feedback

- No code space and/or RAM issues

- No need for target hardware

Disadvantages

- The development platform and target platform are most likely different (e.g. sizeof(int) is typically 4 bytes on a desktop PC but can be 2 bytes on an 8-bit or 16-bit microcontroller). Because of these differences, some issues will only occur on the target device and will not be detected on the host.

- It is possible to write code that will not compile with the target’s compiler (e.g. by using language features or library functions it does not support)
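To illustrate the `sizeof(int)` point, consider a hypothetical helper that averages two signed 16-bit ADC readings. A naive `(a + b) / 2` passes every host test on a PC, where `int` is 32 bits, but overflows on a target with 16-bit `int`. This sketch widens explicitly to `int32_t` so the result is the same on both; `average_readings` and its test are invented for illustration.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical helper: average two signed 16-bit ADC readings.
 *
 * Written as (a + b) / 2 with plain int, this passes on a 32-bit host,
 * but where int is 16 bits the sum 30000 + 30000 overflows, which is
 * undefined behaviour in C. Widening to int32_t before the addition
 * gives the same result on both platforms. */
int16_t average_readings(int16_t a, int16_t b)
{
    int32_t sum = (int32_t)a + (int32_t)b;
    return (int16_t)(sum / 2);   /* always fits back into int16_t */
}

/* This host test passes for BOTH the naive and the widened version,
 * which is why the tests must also be run on the target. */
void test_average_of_large_readings(void)
{
    assert(average_readings(30000, 30000) == 30000);
}
```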

Dual Targeting

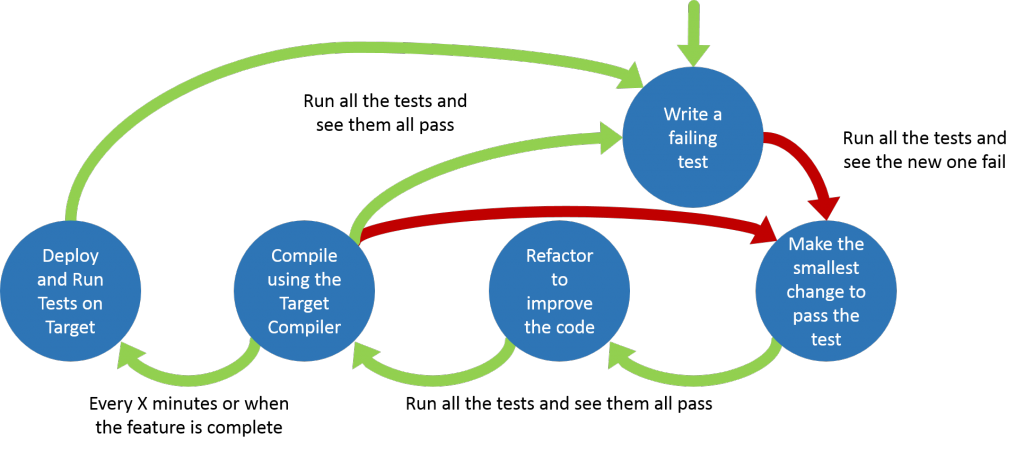

Dual targeting takes the advantages of both ‘Testing on the Development Platform’ and ‘Testing on the Target Device’ and minimises the disadvantages.

It extends the standard TDD cycle:

- Red, Green, Refactor

- After every passing test, compile for the target to make sure you can! (This checks you haven’t used any features the embedded compiler doesn’t support.) Fix any issues encountered.

- Every 15 minutes (or when you’ve finished a logical set of tests), run the tests on the target device and fix any issues encountered.

  - More on how to do this with limited hardware availability later

Advantages

- Fast feedback

- More portable code

- Compiling with two different compilers increases the chances of catching issues (different compilers give different warnings)

Disadvantages

- Maintaining two builds

  - This can be minimised if you use the same build system and just switch the compiler and linker
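A single test source can run on both platforms if only the failure reporting differs between the builds. In this minimal sketch, a hypothetical `TARGET_BUILD` macro is defined only by the target build, which maps a `TEST_ASSERT` macro onto a hypothetical board-support routine `uart_puts` so results are sent back over a serial line; the host build maps it onto the standard `assert`. `saturating_add_u8` stands in for production code.

```c
#include <stdint.h>

#ifdef TARGET_BUILD
/* Target build: report failures over a UART (uart_puts is a hypothetical
 * board-support function) and carry on with the remaining tests. */
void uart_puts(const char *s);
static unsigned test_failures;
#define TEST_ASSERT(cond)                        \
    do {                                         \
        if (!(cond)) {                           \
            test_failures++;                     \
            uart_puts("FAIL: " #cond "\n");      \
        }                                        \
    } while (0)
#else
/* Host build: plain assert() aborts with file and line on failure. */
#include <assert.h>
#define TEST_ASSERT(cond) assert(cond)
#endif

/* Hypothetical production code, compiled unchanged by both compilers. */
uint8_t saturating_add_u8(uint8_t a, uint8_t b)
{
    unsigned sum = (unsigned)a + (unsigned)b;   /* at most 510: fits even a 16-bit unsigned */
    return (uint8_t)(sum > 255u ? 255u : sum);
}

/* The same test source runs on the host and on the target. */
void test_saturating_add(void)
{
    TEST_ASSERT(saturating_add_u8(1, 2) == 3);
    TEST_ASSERT(saturating_add_u8(200, 100) == 255);
}
```

Everything outside the `#ifdef` block is shared, which keeps the cost of the second build low.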

Splitting the problem

How you split your code up will determine how easy or difficult it is to test.

- Use a modular approach

- Stick to SOLID principles

- Low Coupling

- Thin outer (low level) layer that isn’t tested

Following these principles will help you develop a design that is both loosely coupled and easily testable.

Don’t add extra methods/functions just to facilitate testing; the tests should help shape the interface to the code.

Architectural Layers

- Application code (Hardware independent) [Easier to test]

- Hardware Aware Code

- PCB Drivers + HAL/BSP

- Processor Drivers + HAL [Harder to test]

The further down the layers (closer to the hardware) you test, the harder testing gets.
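Code in the lowest layers usually writes to memory-mapped registers, which cannot be dereferenced on the host. One way to keep such driver code testable is compile-time substitution with a macro: the target build maps register access onto the real address, the host build onto an ordinary variable. This is a minimal sketch; the `LED_PORT` register, its address and the `TARGET_BUILD` macro are all hypothetical.

```c
#include <assert.h>
#include <stdint.h>

#ifdef TARGET_BUILD
/* Target: a memory-mapped output register (hypothetical address). */
#define LED_PORT (*(volatile uint32_t *)0x40020014u)
#else
/* Host: an ordinary variable stands in for the register. */
volatile uint32_t fake_led_port;
#define LED_PORT fake_led_port
#endif

/* Driver code under test: identical source for both builds.
 * UINT32_C keeps the shift 32 bits wide even where int is 16 bits. */
void led_on(unsigned led)
{
    LED_PORT |= (UINT32_C(1) << led);
}

void led_off(unsigned led)
{
    LED_PORT &= ~(UINT32_C(1) << led);
}

#ifndef TARGET_BUILD
/* Host-only test: inspects the fake register directly. */
void test_led_on_sets_only_its_bit(void)
{
    fake_led_port = 0;
    led_on(3);
    assert(fake_led_port == (UINT32_C(1) << 3));
}
#endif
```

Only the one-line target definition of `LED_PORT` stays untested, which matches the thin untested outer layer described above.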

Mocking

Techniques

- Run-time substitution

  - Interface (C++)

  - Inheritance (C++)

  - VTable (C)

- Link-time substitution

  - Linking other object files (C/C++)

  - Weak linking functions (C)

- Compile-time substitution

  - Macros (C/C++)

  - Templates (C++)
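In C, run-time substitution can be done with a "vtable": a struct of function pointers that the code under test receives instead of calling a driver directly. This is a minimal sketch; the `temp_sensor_ops` interface, the `overheat_check` function and the fake sensor are hypothetical.

```c
#include <assert.h>
#include <stdbool.h>

/* The "interface": a table of function pointers plus an opaque context. */
typedef struct {
    int (*read_celsius)(void *ctx);
    void *ctx;
} temp_sensor_ops;

/* Code under test depends only on the table, not on a real driver. */
bool overheat_check(const temp_sensor_ops *sensor, int limit_celsius)
{
    return sensor->read_celsius(sensor->ctx) > limit_celsius;
}

/* Test double: returns the value the test configured and counts calls. */
typedef struct {
    int value;
    int calls;
} fake_sensor;

static int fake_read_celsius(void *ctx)
{
    fake_sensor *f = ctx;
    f->calls++;
    return f->value;
}

void test_overheat_reported_above_limit(void)
{
    fake_sensor fake = { .value = 91, .calls = 0 };
    temp_sensor_ops ops = { fake_read_celsius, &fake };
    assert(overheat_check(&ops, 90));
    assert(fake.calls == 1);
}
```

In production, the real driver would fill the table with its own read function. The price is an indirect call per access and a little RAM for the table, which is usually acceptable but worth weighing on very small parts.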

Advantages

- Testing drivers

- Testing error cases from hardware

- Developing before the hardware is ready
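Link-time substitution suits testing error cases from hardware: production code calls an ordinary function, and the test build links a fake definition in place of the real driver's object file. Here the fake sits in the same listing only so the sketch compiles as one file; in practice it would live in its own source file (e.g. a hypothetical `fake_i2c.c`) linked instead of the real driver. `i2c_read_byte`, `i2c_result`, `fake_i2c_set` and `sensor_read_status` are all hypothetical.

```c
#include <assert.h>
#include <stdint.h>

/* i2c.h: driver interface used by production code. */
typedef enum { I2C_OK, I2C_NACK } i2c_result;
i2c_result i2c_read_byte(uint8_t address, uint8_t *out);

/* sensor.c: code under test. Returns -1 if the bus transfer fails. */
int sensor_read_status(void)
{
    uint8_t value;
    if (i2c_read_byte(0x48, &value) != I2C_OK) {
        return -1;
    }
    return value;
}

/* fake_i2c.c: linked into the test build instead of the real driver. */
static i2c_result fake_result = I2C_OK;
static uint8_t fake_value;

void fake_i2c_set(i2c_result result, uint8_t value)
{
    fake_result = result;
    fake_value = value;
}

i2c_result i2c_read_byte(uint8_t address, uint8_t *out)
{
    (void)address;               /* the fake ignores the device address */
    *out = fake_value;
    return fake_result;
}

/* An error that is hard to provoke on real hardware, on demand. */
void test_bus_error_is_reported(void)
{
    fake_i2c_set(I2C_NACK, 0);
    assert(sensor_read_status() == -1);
}
```

Unlike the vtable approach, the production call sites are unchanged, but each test executable can link only one version of `i2c_read_byte`.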

Choosing a test framework – consider

- Size of framework

- Portability

- Ease of use

- Options

  - Unity

  - Embedded Unit

  - Google Test

Other

- Integration tests to check the interaction between classes where one of them was mocked in the unit tests

- Integration tests that check hardware interaction

  - Only run on the target

- Using build/CI servers to run unit tests on hardware

- Polymorphic tests

- Consider what a unit is
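One reading of "polymorphic tests" is a test written once against an interface and run against every implementation of it, for example both the real driver and its fake, so the fake cannot quietly drift from the real behaviour. This is a minimal sketch using the C vtable approach; the `counter_ops` interface and both implementations are hypothetical, and the "hardware" counter is simulated in RAM so it runs on the host.

```c
#include <assert.h>

/* Hypothetical interface with more than one implementation. */
typedef struct {
    void (*reset)(void *self);
    void (*increment)(void *self);
    unsigned (*value)(const void *self);
} counter_ops;

/* Implementation 1: models a down-counting hardware register. */
#define RELOAD 1000u
typedef struct { unsigned reg; } hw_counter;
static void hw_reset(void *s) { ((hw_counter *)s)->reg = RELOAD; }
static void hw_increment(void *s) { ((hw_counter *)s)->reg--; }
static unsigned hw_value(const void *s) { return RELOAD - ((const hw_counter *)s)->reg; }
static const counter_ops hw_counter_ops = { hw_reset, hw_increment, hw_value };

/* Implementation 2: the simple fake other modules use in their tests. */
typedef struct { unsigned count; } fake_counter;
static void fake_reset(void *s) { ((fake_counter *)s)->count = 0; }
static void fake_increment(void *s) { ((fake_counter *)s)->count++; }
static unsigned fake_value(const void *s) { return ((const fake_counter *)s)->count; }
static const counter_ops fake_counter_ops = { fake_reset, fake_increment, fake_value };

/* The contract: written once, run against every implementation. */
void counter_contract_test(const counter_ops *ops, void *instance)
{
    ops->reset(instance);
    assert(ops->value(instance) == 0);
    ops->increment(instance);
    ops->increment(instance);
    assert(ops->value(instance) == 2);
}

void test_all_counters(void)
{
    hw_counter hw;
    fake_counter fake;
    counter_contract_test(&hw_counter_ops, &hw);
    counter_contract_test(&fake_counter_ops, &fake);
}
```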